You can find the complete project from this blog post here: https://github.com/BruceMacD/mnemosyne

Like everyone else, I've been using ChatGPT to complete basic coding tasks. One recurring annoyance is having to gather all the relevant information I've sent to ChatGPT in the past so that it has the proper context when I ask a new question.

After seeing Supabase's ChatGPT documentation interface, I was inspired to use a similar combination of tools to store the context of my previous conversations. Where Supabase stores its documentation in a vector database, I could instead store my conversations with ChatGPT for future reference.

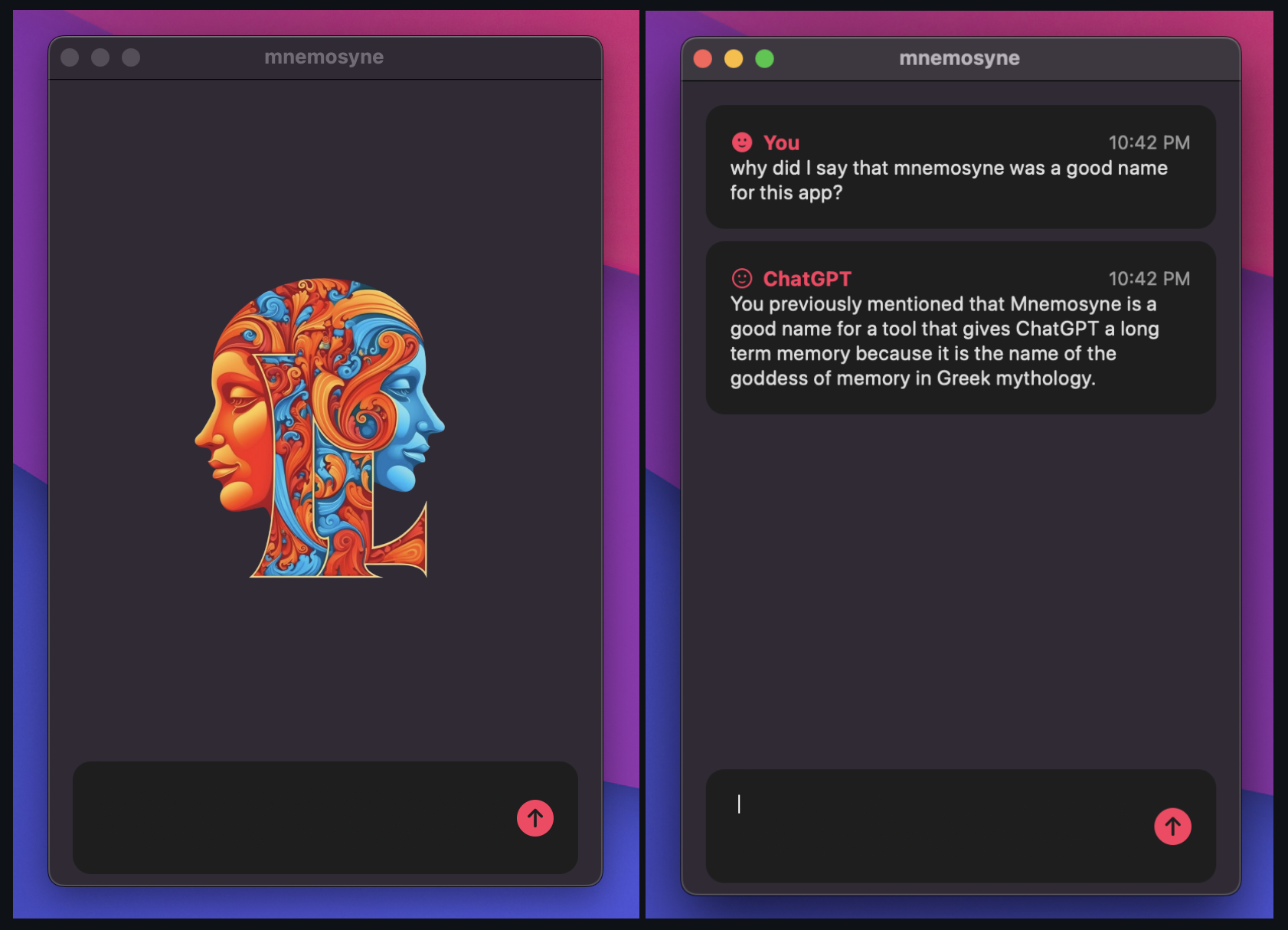

Mnemosyne: Giving Context to ChatGPT

I use a Mac, so I created a simple desktop interface to interact with ChatGPT. Before the prompt is sent to ChatGPT, I wrap the new query with some additional context that is retrieved from a vector database (Milvus) that I'm running locally in a container. The prompt that actually gets sent to ChatGPT looks like this:

Given the queries I have asked previously (in the previous section), and your replies (in the replied section), answer my new query.

Previous section:

(... previous queries I have sent to ChatGPT go here)

Replied section:

(... previous responses from ChatGPT go here)

New query:

(... the actual query)
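To make the wrapping step concrete, here is a minimal sketch in Python of how a prompt in this format could be assembled. It is not the code from the Mnemosyne repository: the `Exchange` record, `most_relevant`, and `build_prompt` are hypothetical names, and the in-memory cosine-similarity ranking is only a stand-in for the similarity search that Milvus performs. It also assumes each stored query already has an embedding vector attached.

```python
import math
from dataclasses import dataclass


@dataclass
class Exchange:
    """One stored query/reply pair plus an embedding of the query (hypothetical schema)."""
    query: str
    reply: str
    embedding: list[float]


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def most_relevant(history, query_embedding, k=3):
    """Stand-in for the vector database lookup: return the k closest past exchanges."""
    ranked = sorted(
        history,
        key=lambda e: cosine_similarity(e.embedding, query_embedding),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(context, new_query):
    """Wrap the new query with past queries and replies in the format shown above."""
    previous = "\n".join(e.query for e in context)
    replied = "\n".join(e.reply for e in context)
    return (
        "Given the queries I have asked previously (in the previous section), "
        "and your replies (in the replied section), answer my new query.\n\n"
        f"Previous section:\n\n{previous}\n\n"
        f"Replied section:\n\n{replied}\n\n"
        f"New query:\n\n{new_query}"
    )
```

In this sketch, the embedding of the new query would be passed to `most_relevant`, and the exchanges it returns would be handed to `build_prompt` together with the query text. Only the closest matches end up in the prompt, which keeps it short and on topic.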

Overall, I've found this to work quite well. ChatGPT understands the format of the prompt and doesn't reference the previous details out of context. I could see OpenAI implementing something like this in the near future, but for the time being this is a nice interface, and a pretty economical one, since Milvus can be run locally.